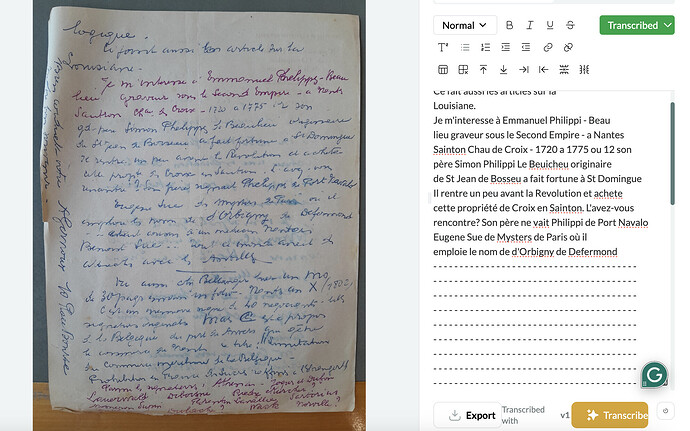

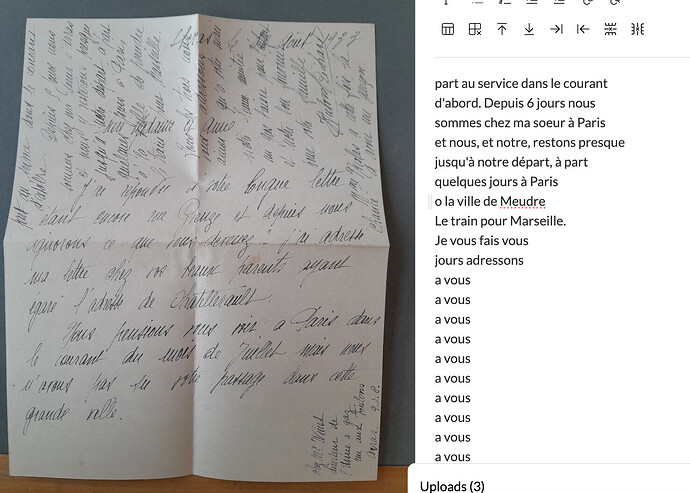

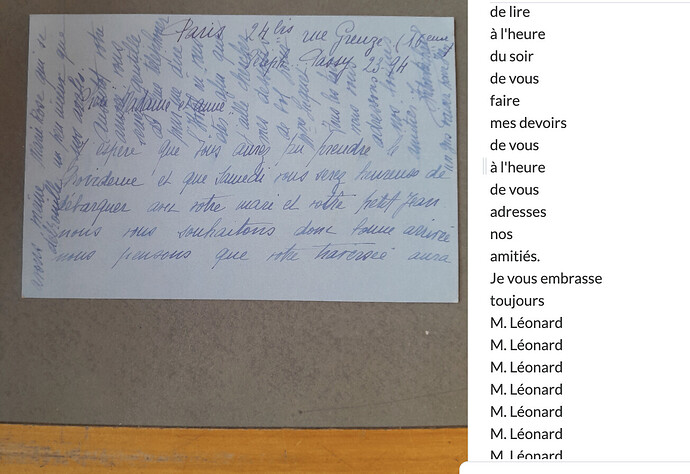

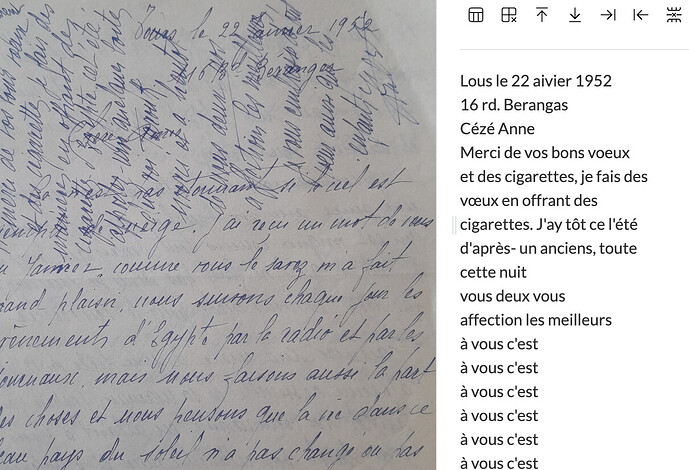

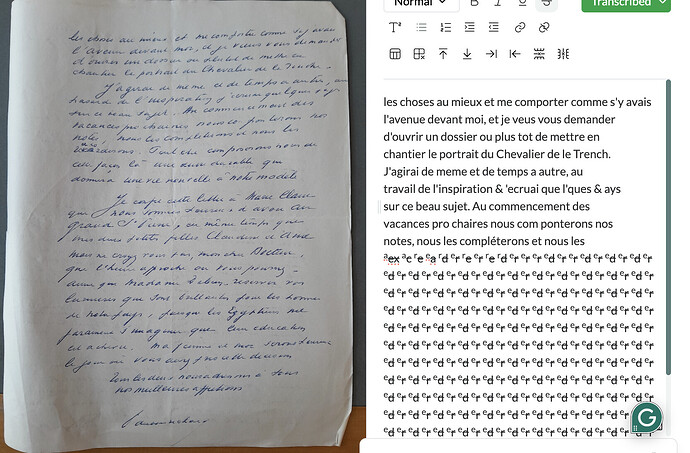

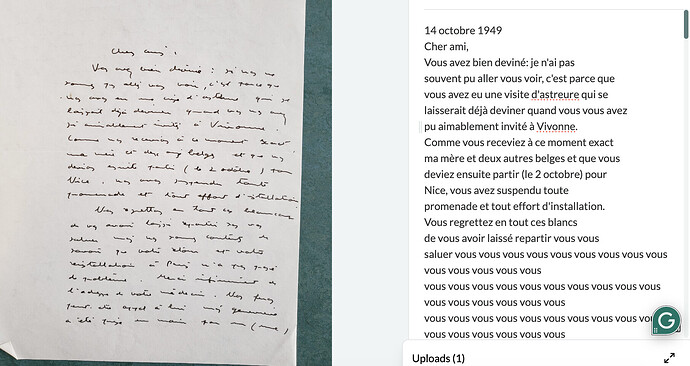

I haven’t figured out why the doom loop is happening but I’ve started to screenshot the documents where it happens. I think part of it is when there is overlapping text (screenshots 2,3 and 4) it can be hard know where it should transcribe first?

Here’s another example. I was wondering if maybe there is a way to code the program so that if you have the same thing like 17+ times in a row, it will instead say , and that would be easier to locate and trouble shoot within the program and also for the user.

1 Like

Thanks for this Paul. The general plan to fix this issue is to train Leo on more data and for longer, when we have access to more computing power. We also plan to allow users to fine tune the model, which would probably fix the issue for a relatively consistent dataset like yours. See here.