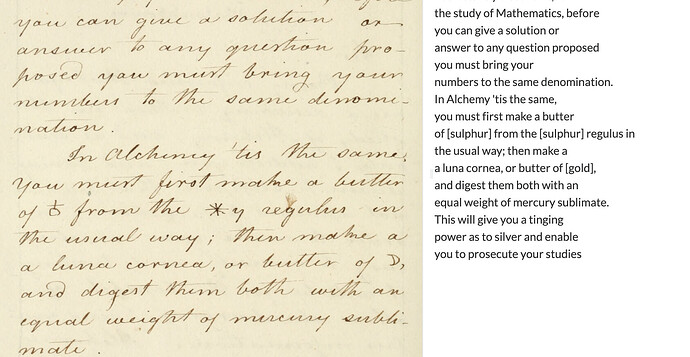

I just discovered a treasure trove of alchemical manuscripts from the early 19th century at the Getty (available on Hathitrust) and I’m starting to download and transcribe them. Of course, they are chock-full of alchemical symbols, which I assumed I would have to go back and correct after Leo did something unpredictable with them. What Leo has done (so far) is actually pretty interesting! He figured out they represented different chemicals, but went 0 for 3 in getting the chemicals right: sulphur instead of antimony/mercury and gold instead of silver. But it’s cool that he made the leap to chemicals!

Oh wow, that’s very cool. Had no idea Leo had learned to do that—hopefully he’ll will get better as he sees more examples of this kind of material. Thanks for sharing!

Yes – this is certainly a case where the user could help in the training. I have both an update (to help you suss out what Leo knows and doesn’t know) and a question. First: Leo is very good on gold! More than 50% correct for a circle with a dot in the middle. So he clearly picked that up somewhere. Otherwise, he generally opts for “sulphur” (oddly, spelled in the 19th century version)for almost all other symbols, which sometimes works, in the same sense that a student who picks the same answer on a multiple choice test with 15 options gets an F. But he did also correctly pick “water” once, and the occasional mercury. So at least he’s trying…

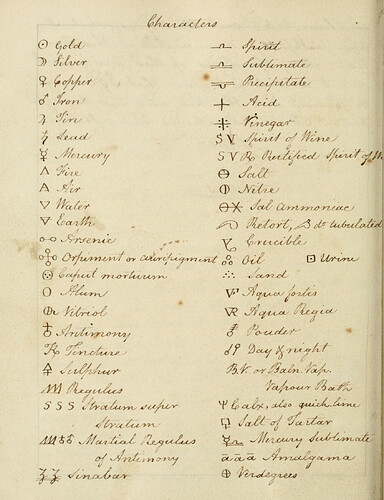

My question concerns how this would work in terms of user input. I could obviously correct Leo online, as we discussed earlier; but the ideal solution in this case (since almost everyone had their own unique set of symbols) would be to ask Leo to scan a chart with translations and learn from that. In this case, my alchemist conveniently included one (see below). Would this work? If so, I foresee a great marketing pitch for the dozens of people who work on alchemy and must go crazy with all the symbols…

This is a very interesting question. It seems as though, at least in theory, it should be possible for the user to provide Leo with a legend like this. In the past, Jack and I have discussed what kinds of context users might be able to provide to improve transcription quality or customize specific aspects of the output.

This particular case is more complex, because instead of just feeding the model plain text, we’d be asking it to use one image (the legend) to interpret another (the alchemical manuscript). Transformer models, even multimodal ones, are typically trained on text or image–text pairs, not on image–image tasks. So they’re not very good at this kind of visual cross-referencing, at least out of the box. To make it work, the model would need to visually match unfamiliar symbols in one image to those in another, understand their spatial layout, and apply that mapping consistently. That’d likely require a lot of specialized training data to do reliably.

That said, there may be a smarter or more constrained way to tackle this, and it’s worth thinking about, especially since similar challenges come up in use cases like astrological charts, shorthand systems, logic notation, or cipher keys.

We’ll also be introducing fine-tuning features soon, which offer a more technically straightforward solution. In that case, the user could correct a few transcriptions, making sure each symbol corresponded to the right element, and then use those examples to refine the model.

Hope that all makes sense and thanks for raising such an intriguing question!

Yes – very helpful! Just to clarify—before I found this chart I was using this one from Wikipedia, which included most of the same symbols my alchemist used, with images that I imagine would be close enough for Leo to recognize “in the wild.” Would this cleaner set of images be an improvement, or is it basically an “image” as opposed to text problem? It does sound like correcting as I go would be the most straightforward, though more work up front for me-- and may not be worth the candle depending on how many pages need to be transcribed (in this case it’s 200 or so, so I’m holding off on the image-heavy ones in case something works).

Here’s the link:

Since there are a lot of “this” or “that” options, the user would have to edit this list to correspond with what’s being used in the ms.

PS – I figured out that by “image-image” you meant that my alchemist’s “translation” was an image. So perhaps this sort of thing (an available-online legend with image + Text, adapted by the user to match what the ms is actually up to) would work pretty well?

Possibly! I think the online legend (image + text) to transcribe a manuscript (image) would still require the kind of image-image inference I mentioned. This is definitely the kind of thing we want to think about as the product matures but it would require overhauling how the model works in quite a significant way.

The eventual plan is to increase the number of options for both the inputs (so instead of just an image, the user could input an image plus custom text (with contextualisation, metadata, etc., or possibly also multiple images for a single transcription) as well as the outputs (to choose what formatting to preserve, whether it should be a semi-diplomatic, diplomatic, or modernized transcription; also to use the generated transcript to produce other outputs, e.g. translations, summaries, etc.)

Before we begin introducing these more advanced functions, our current priority is to ensure that the core functionality works correctly. But while not on the immediate horizon it’s extremely useful to know that there is demand for this kind of feature as we plan next steps!

Okay! All good. The fact that Leo (usually) includes some sort of chemical in bracket at least makes it easy enough to go through and correct them.